The use of conversational devices and smart speakers has increased in recent years. This article introduces conversational interfaces and their three main parts: interaction flow, natural language understanding, and deployment.

Let’s look at what happens behind the scenes of conversational artificial intelligence.

Designing and building an enterprise-level skill for a conversational device involves three major components:

- Interaction Flow – This component covers defining and building the interaction that users go through to reach a business goal, get answers to their queries, or troubleshoot a problem.

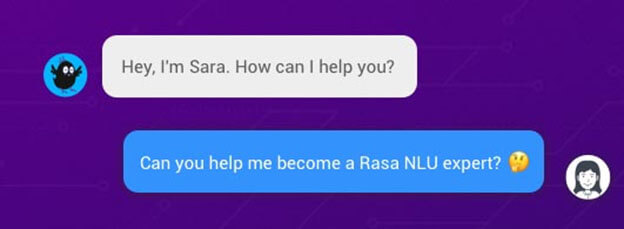

- Natural Language Understanding (NLU) – The NLU component lets the bot understand user messages and respond in natural language. It involves tasks such as slot filling, intent classification, semantic search, sentiment understanding, question answering, and response generation.

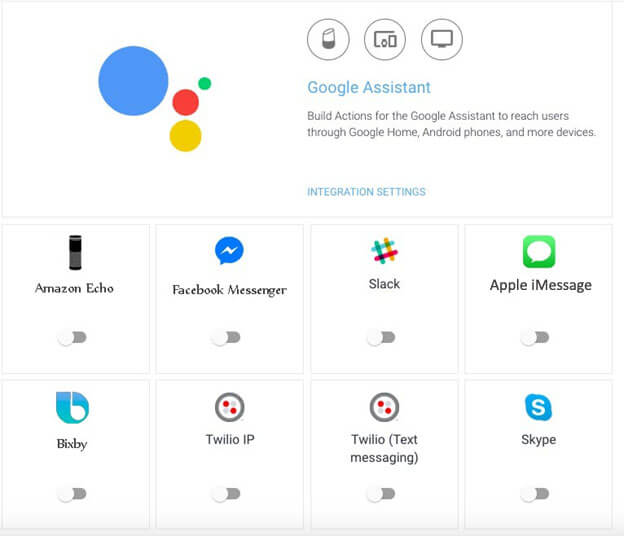

- Deployment – Once the interaction has been defined and built and NLU has been added to the interface, it needs to be deployed to several channels. Amazon Echo, Microsoft Cortana, and Google Home are a few of the voice interfaces that can be integrated. Messaging channels such as Android business messaging, Facebook Messenger, and even website chatbots can be integrated as well.

Interaction Flow

An interaction flow acts as a mind map of the interaction between the user and a bot or conversational interface. Designing the flow before developing it makes the building process easier.

What do we discover while designing conversational flows?

Here are the top three lessons we learned while designing conversational flows:

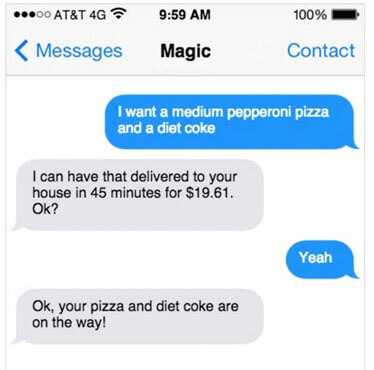

More conversational and personable flow

An intelligent chatbot is one that doesn’t talk like a robot. Instead of repeating the same text every time, a bot can be configured to pick a random sentence from a list. Another critical thing is to give the conversational interface a personality. People are talking to the bot, and you can make a difference by making it more personable, for example: “Hello dear, hope you are having a great day. What can I do for you today?”
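A minimal sketch of the "random sentence from a list" idea is shown here. The greeting texts and the `pick_greeting` helper are illustrative assumptions, not part of any particular bot platform.

```python
import random

# Hypothetical greeting variants; the wording is illustrative only.
GREETINGS = [
    "Hello dear, hope you are having a great day. What can I do for you today?",
    "Hi there! How can I help you today?",
    "Welcome! What would you like to do?",
]


def pick_greeting(last_used=None):
    """Return a random greeting, avoiding an immediate repeat of last_used."""
    candidates = [g for g in GREETINGS if g != last_used] or GREETINGS
    return random.choice(candidates)


first = pick_greeting()
second = pick_greeting(last_used=first)
print(first)
print(second)
```

Remembering the last greeting keeps the bot from saying the same sentence twice in a row, which is usually what makes a bot feel robotic.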

Knowledge of the context

The bot’s response should not depend on the previous user message alone; context also plays a significant role. Context includes things like past conversations between the user and the conversational interface, the platform modality (voice-based or text-based), the user’s experience with the product, and the stage of the workflow the user is currently in.
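To make this concrete, here is a small sketch in which the same user message ("yes") is interpreted differently depending on the workflow stage. The `ConversationContext` class, the stage names, and the returned labels are all hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class ConversationContext:
    """Hypothetical container for the kinds of context described above."""
    modality: str = "text"            # "voice" or "text"
    workflow_stage: str = "start"     # where the user is in the workflow
    returning_user: bool = False      # rough proxy for product experience
    history: list = field(default_factory=list)  # past user messages


AFFIRMATIVE = {"yes", "yeah", "sure", "ok"}


def interpret(message, context):
    """Interpret a message using the workflow stage, not the text alone."""
    context.history.append(message)
    if message.strip().lower() in AFFIRMATIVE:
        if context.workflow_stage == "confirm_pickup":
            return "pickup_confirmed"
        if context.workflow_stage == "offer_help":
            return "help_requested"
    return "unknown"


print(interpret("Yes", ConversationContext(workflow_stage="confirm_pickup")))
print(interpret("Yes", ConversationContext(workflow_stage="offer_help")))
```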

Handling error cases with grace

No bot knows everything, so mistakes are to be expected. You can minimize them, and soften their impact, with the following tips:

- Confirm with the user what the bot has understood from the conversation so far.

- Ask for clarification when the bot needs extra details.

- Let the user know, gracefully, when the bot is unable to understand the message.
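The three tips can be sketched as a single decision function. The confidence thresholds (0.4 and 0.8), the `choose_recovery` name, and the reply wording are assumptions made for this example; a real bot would tune them for its own NLU component.

```python
def choose_recovery(intent, confidence, missing_variables):
    """Pick a recovery reply, or return None when the bot can simply act.

    The thresholds below are illustrative assumptions, not recommended values.
    """
    if intent is None or confidence < 0.4:
        # Tip 3: admit gracefully that the message was not understood.
        return "Sorry, I didn't quite get that. Could you rephrase it?"
    if missing_variables:
        # Tip 2: ask for the extra detail that is still needed.
        name = missing_variables[0].replace("_", " ")
        return f"Could you tell me your {name}?"
    if confidence < 0.8:
        # Tip 1: confirm what the bot has understood so far.
        action = intent.replace("_", " ")
        return f"Just to confirm, you want to {action}. Is that right?"
    return None


print(choose_recovery(None, 0.0, []))
print(choose_recovery("pick_up_package", 0.9, ["id_number"]))
print(choose_recovery("pick_up_package", 0.6, []))
```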

Leveraging intelligent automation consulting can help organizations minimize these errors and optimize their chatbot's performance through advanced AI techniques and continuous learning algorithms.

Building blocks of Conversational Workflow

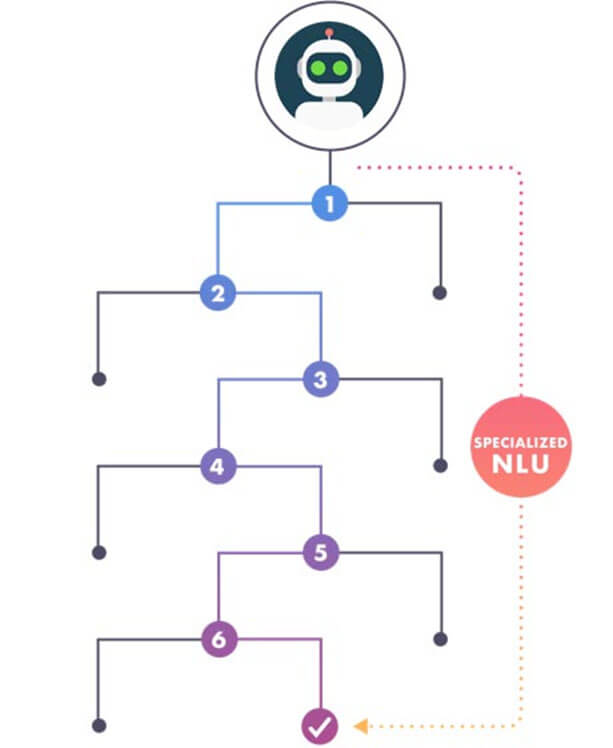

You can build a conversational workflow with abstractions like intents, variables, decision trees for troubleshooting, webhooks for calling an API, and a knowledge base for answering queries. Let’s discuss these building blocks in detail:

A. Intent

An intent is the basic building block of a conversational interface. It captures the meaning of a message sent by the user. An intent can have keywords, variables, and webhooks that run an action.
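As a rough illustration of keyword-based intents, the sketch below picks the intent whose keywords overlap most with the user's message. The intent names and keyword sets are made up for this example, and production systems typically use a trained classifier rather than plain keyword matching.

```python
import re

# Hypothetical intents and their keywords.
INTENT_KEYWORDS = {
    "pickup_package": {"pick", "pickup", "package", "parcel", "collect"},
    "opening_hours": {"open", "hours", "close", "closing"},
}


def classify_intent(message):
    """Return the intent with the most keyword matches, or None if no keyword matches."""
    tokens = set(re.findall(r"[a-z]+", message.lower()))
    best_intent, best_score = None, 0
    for intent, keywords in INTENT_KEYWORDS.items():
        score = len(tokens & keywords)
        if score > best_score:
            best_intent, best_score = intent, score
    return best_intent


print(classify_intent("I want to pick up my package"))
print(classify_intent("When do you open?"))
```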

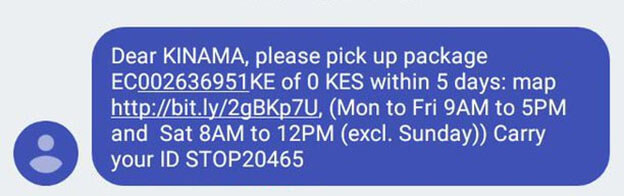

B. Variables

Variables describe the input the bot needs from the user to carry out the intent. The accompanying image shows an example: the user provides the number of the package they need to pick up, along with an ID number that is used for verification. Once the intent is identified and all the necessary variables are received, the action is performed.
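The package pickup example could be sketched as follows. The `PKG` and `ID` number formats are invented for illustration; the point is only that the action waits until every required variable has been collected.

```python
import re

# Hypothetical formats: "PKG" + 6 digits for packages, "ID" + 4 digits for IDs.
REQUIRED_VARIABLES = {
    "package_number": re.compile(r"\bPKG\d{6}\b"),
    "id_number": re.compile(r"\bID\d{4}\b"),
}


def fill_variables(message, collected=None):
    """Extract any missing variables from the message.

    Returns the updated variables and the names that are still missing.
    """
    collected = dict(collected or {})
    for name, pattern in REQUIRED_VARIABLES.items():
        if name not in collected:
            match = pattern.search(message)
            if match:
                collected[name] = match.group(0)
    missing = [name for name in REQUIRED_VARIABLES if name not in collected]
    return collected, missing


variables, missing = fill_variables("I need to pick up package PKG123456")
print(variables, missing)   # the bot would now ask for the ID number
variables, missing = fill_variables("It is ID4821", variables)
print(variables, missing)   # nothing missing, so the action can run
```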

C. Decision trees

Many use cases involve troubleshooting, where the bot has to make specific decisions and respond according to the details users share. Decision trees are used to handle this kind of problem.
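A troubleshooting decision tree can be represented as nested dictionaries, as in this sketch. The questions and answers are invented, and `next_step` is a hypothetical helper that replays the user's yes/no replies to find what the bot should say next.

```python
# Hypothetical troubleshooting tree: inner nodes ask, leaves answer.
TREE = {
    "question": "Is the device powered on?",
    "yes": {
        "question": "Is the status light blinking?",
        "yes": {"answer": "Restart the router and wait two minutes."},
        "no": {"answer": "Check that the network cable is plugged in."},
    },
    "no": {"answer": "Hold the power button for three seconds."},
}


def next_step(tree, replies):
    """Follow the user's "yes"/"no" replies and return what to say next."""
    node = tree
    for reply in replies:
        if "answer" in node:
            break
        node = node[reply]  # raises KeyError for anything other than "yes"/"no"
    if "answer" in node:
        return ("answer", node["answer"])
    return ("ask", node["question"])


print(next_step(TREE, []))
print(next_step(TREE, ["yes"]))
print(next_step(TREE, ["yes", "no"]))
```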

D. Knowledge Base

Most conversational agents respond to user queries, especially in customer service. With a knowledge base, you can simply load FAQs into the bot, and it can then find the right answer to a user’s query.
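Here is a minimal sketch of an FAQ lookup. It scores each stored question by word overlap (Jaccard similarity) with the user's query, which is a simple stand-in for the semantic search a real knowledge base would use. The FAQ entries and the `min_score` threshold are assumptions for this example.

```python
import re

# Hypothetical FAQ entries.
FAQS = {
    "What are your opening hours?": "We are open from 9 am to 6 pm, Monday to Friday.",
    "How do I reset my password?": "Use the 'Forgot password' link on the sign-in page.",
    "Where can I pick up my package?": "Packages can be collected at the front desk.",
}


def tokenize(text):
    """Lowercase the text and return its set of words."""
    return set(re.findall(r"[a-z]+", text.lower()))


def find_answer(query, min_score=0.2):
    """Return the answer of the most similar FAQ, or None if nothing is close enough."""
    query_tokens = tokenize(query)
    best_answer, best_score = None, 0.0
    for question, reply in FAQS.items():
        question_tokens = tokenize(question)
        union = query_tokens | question_tokens
        score = len(query_tokens & question_tokens) / len(union) if union else 0.0
        if score > best_score:
            best_answer, best_score = reply, score
    return best_answer if best_score >= min_score else None


print(find_answer("how can I reset my password"))
print(find_answer("tell me a joke"))
```

Returning `None` below the threshold gives the bot a chance to say that it does not know, instead of replying with an unrelated answer.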

Natural Language Processing (NLP)

NLP is vast, with core elements like slot filling, intent classification, semantic search, and dialog tracking. An AI development company may focus on basics such as deep learning, embeddings, and LSTM to build effective conversational AI systems.

A. Deep Learning

Deep learning is a form of machine learning that does not need the handcrafted features that traditional approaches rely on. The raw input is represented as a vector, and the model learns the interactions between the elements of that input by tuning its weights during training so that it produces the expected output.

Deep learning is used for many NLP tasks, such as text classification, language translation, information extraction, and AI text-to-speech.

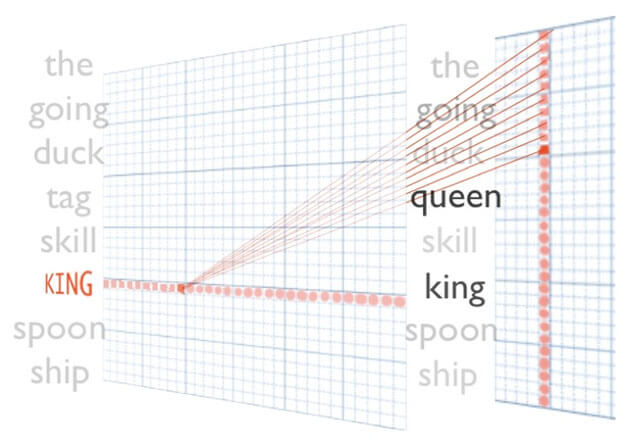

B. Embedding

Embedding is the first step in any deep learning application for NLP: it converts words and sentences into vectors. There are four main types of embedding:

- Non-contextual word embedding

- Contextual word embedding

- Subword embedding

- Sentence embedding

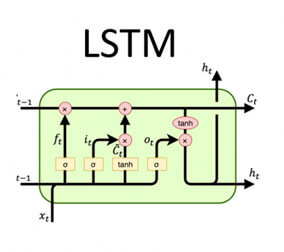
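The toy sketch below illustrates three of these ideas: a non-contextual word lookup table, character n-grams as subword units, and a sentence vector built by averaging word vectors. The 3-dimensional vectors are hand-written for illustration, whereas real embeddings are learned from data. Averaging is only one simple way to get a sentence vector. Contextual embeddings are not shown because they require a trained model.

```python
# Toy, hand-written vectors; real embeddings are learned and much larger.
WORD_VECTORS = {
    "track": [0.9, 0.1, 0.0],
    "my": [0.1, 0.1, 0.1],
    "package": [0.8, 0.2, 0.1],
}


def char_ngrams(word, n=3):
    """Split a word into character n-grams, with < and > marking its boundaries."""
    padded = f"<{word}>"
    return [padded[i:i + n] for i in range(len(padded) - n + 1)]


def sentence_embedding(sentence):
    """Average the vectors of the known words in the sentence."""
    vectors = [WORD_VECTORS[w] for w in sentence.lower().split() if w in WORD_VECTORS]
    if not vectors:
        return [0.0, 0.0, 0.0]
    return [sum(dim) / len(vectors) for dim in zip(*vectors)]


print(WORD_VECTORS["package"])                 # non-contextual word embedding
print(char_ngrams("bot"))                      # subword units
print(sentence_embedding("track my package"))  # sentence embedding
```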

C. Long Short-Term Memory Network (LSTM)

The Recurrent Neural Network (RNN) is the second important technique in deep learning applications for NLP, and the Long Short-Term Memory network is a variant of the RNN. LSTMs have been used successfully for several supervised NLP tasks, such as text classification.
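To give a feel for what an LSTM computes, here is one step of a scalar LSTM cell using the standard gate equations. The weights are made-up constants. Real LSTMs operate on vectors with learned weight matrices and are normally built with a deep learning framework, not written by hand.

```python
import math


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def lstm_step(x, h_prev, c_prev, weights):
    """Run one step of a scalar LSTM cell.

    weights maps each gate name to a tuple (w_x, w_h, bias).
    """
    def gate(name, activation):
        w_x, w_h, bias = weights[name]
        return activation(w_x * x + w_h * h_prev + bias)

    forget = gate("forget", sigmoid)        # how much old memory to keep
    write = gate("input", sigmoid)          # how much new information to store
    output = gate("output", sigmoid)        # how much memory to expose
    candidate = gate("candidate", math.tanh)

    c = forget * c_prev + write * candidate  # updated cell state (memory)
    h = output * math.tanh(c)                # updated hidden state (output)
    return h, c


# Illustrative constants only; in practice these are learned during training.
WEIGHTS = {name: (0.5, 0.5, 0.0) for name in ("forget", "input", "output", "candidate")}

h, c = 0.0, 0.0
for x in [1.0, -1.0, 0.5]:
    h, c = lstm_step(x, h, c, WEIGHTS)
    print(round(h, 4), round(c, 4))
```

The cell state `c` is what lets the network carry information across many steps of a sequence, which is why LSTMs suit tasks like classifying a whole sentence.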

Deployment

There are numerous conversational platforms today: Amazon Echo, Google Assistant, Apple iMessage, Facebook Messenger, Slack, traditional and dynamic IVR solutions, and more. Having so many platforms is a headache for developers, who would otherwise have to build an individual bot for each one. Partnering with an experienced AI solutions provider can ease these challenges by offering expertise in multi-channel integration and scalable deployment strategies.

There are two things developers should keep in mind when deploying a bot:

- Bot versioning – Versioning allows you to roll back your changes and keep track of the changes that have been deployed.

- Bot testing – Testing a bot is not trivial, since its response depends on both the user message and the context. Developers also need to test the sub-components individually for simpler debugging and faster iteration.
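As a sketch of the versioning idea, the class below keeps every deployed bot configuration in memory and lets you roll back to the previous one. `BotVersionStore` and its methods are hypothetical; a real deployment would use the versioning features of its bot platform or source control.

```python
class BotVersionStore:
    """Hypothetical in-memory store of deployed bot configurations."""

    def __init__(self):
        self._versions = []  # every configuration ever deployed, oldest first
        self._live = None    # index of the version currently serving users

    def deploy(self, config):
        """Store a new configuration, make it live, and return its version number."""
        self._versions.append(dict(config))
        self._live = len(self._versions) - 1
        return self._live + 1

    def rollback(self):
        """Make the previous version live again and return its version number."""
        if self._live is None or self._live == 0:
            raise ValueError("no earlier version to roll back to")
        self._live -= 1
        return self._live + 1

    @property
    def live_config(self):
        return None if self._live is None else dict(self._versions[self._live])

    @property
    def deployed_count(self):
        return len(self._versions)


store = BotVersionStore()
store.deploy({"greeting": "Hi"})
store.deploy({"greeting": "Hello dear"})
store.rollback()
print(store.live_config, store.deployed_count)
```

A small, self-contained component like this is also easy to test in isolation, which is the kind of sub-component testing the second point recommends.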

Conclusion

In this article, we covered the basic building blocks of intelligent conversational interfaces. In the future, these interfaces are likely to become more polished and human-like, and users should be able to get precise, immediate answers from them. Trust us, developers are working harder on it than you could imagine!